I have installed R and SparkR on my Hadoop/Spark cluster, as described in this post. I have also installed Apache Zeppelin with R so that SparkR can be used from Zeppelin (here).

So far, I can offer my users SparkR through the CLI and Apache Zeppelin. But they all want one interface: RStudio. This post describes how to install RStudio Server and configure it to work with Apache Spark.

On my cluster, I am running Apache Spark 1.6.0, manually installed (installation process). Underneath is a multinode Hadoop cluster from Hortonworks.

RStudio Server is installed on one client node in the cluster:

- Update the Ubuntu system

sudo apt-get update

- Download the RStudio Server .deb package (make sure you are downloading RStudio Server, not the desktop client!)

sudo wget https://download2.rstudio.org/rstudio-server-0.99.893-amd64.deb

- Install gdebi (about gdebi)

sudo apt-get install gdebi-core -y

- Install the libjpeg62 package

sudo apt-get install libjpeg62 -y

- If you get the following error:

You might want to run 'apt-get -f install' to correct these:

The following packages have unmet dependencies:

rstudio : Depends: libgstreamer0.10-0 but it is not going to be installed
          Depends: libgstreamer-plugins-base0.10-0 but it is not going to be installed

E: Unmet dependencies. Try 'apt-get -f install' with no packages (or specify a solution).

then run:

sudo apt-get -f install

- Install RStudio Server

sudo gdebi rstudio-server-0.99.893-amd64.deb

- During the installation, gdebi asks the following question. Type “y” and press Enter.

RStudio is a set of integrated tools designed to help you be more productive with R. It includes a console, syntax-highlighting editor that supports direct code execution, as well as tools for plotting, history, and workspace management.

Do you want to install the software package? [y/N]:

- Find your path to $SPARK_HOME

echo $SPARK_HOME
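The SparkR steps further down will fail if echo $SPARK_HOME prints nothing or points to the wrong place. Here is a minimal sketch of a check. It assumes, as the library(SparkR, lib.loc = ...) call below does, that the SparkR package sits under $SPARK_HOME/R/lib. check_spark_home is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check that a directory looks like a Spark installation
# that ships SparkR. Assumption: SparkR lives in $SPARK_HOME/R/lib/SparkR.
# Uses the first argument, or $SPARK_HOME when no argument is given.
check_spark_home() {
  local spark_home="${1:-${SPARK_HOME:-}}"
  if [ -z "$spark_home" ]; then
    echo "SPARK_HOME is not set" >&2
    return 1
  fi
  if [ ! -d "$spark_home/R/lib/SparkR" ]; then
    echo "No SparkR package found under $spark_home/R/lib" >&2
    return 1
  fi
  # Print the validated path so it can be reused, e.g. in Rprofile.site.
  echo "$spark_home"
}
```

Calling check_spark_home with no argument checks the current $SPARK_HOME. Pass a path to check a different installation.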

- Set the SPARK_HOME environment variable in Rprofile.site

The file should be located at /usr/lib/R/etc/Rprofile.site. Open it and append the following line (adjust the path to wherever Spark is installed on your node):

Sys.setenv(SPARK_HOME="/usr/apache/spark-1.6.0-bin-hadoop2.6")
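Editing Rprofile.site by hand works. However, if the setup is repeated (for example on several client nodes), the same line can end up appended twice. Below is a small idempotent sketch. add_spark_home_to_rprofile is a hypothetical helper, and writing to /usr/lib/R/etc/Rprofile.site requires root.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append Sys.setenv(SPARK_HOME=...) to an Rprofile.site
# file, but only if exactly that line is not already present.
add_spark_home_to_rprofile() {
  local rprofile="$1"
  local spark_home="$2"
  local line="Sys.setenv(SPARK_HOME=\"${spark_home}\")"
  touch "$rprofile" || return 1
  # -F fixed string, -x whole-line match, -q no output.
  if grep -Fxq "$line" "$rprofile"; then
    echo "already present: $line"
    return 0
  fi
  # If the file does not end with a newline, add one so the new line stays separate.
  if [ -s "$rprofile" ] && [ -n "$(tail -c 1 "$rprofile")" ]; then
    printf '\n' >> "$rprofile"
  fi
  printf '%s\n' "$line" >> "$rprofile"
  echo "appended: $line"
}
```

For example, in a root shell: add_spark_home_to_rprofile /usr/lib/R/etc/Rprofile.site /usr/apache/spark-1.6.0-bin-hadoop2.6. The helper only looks for that exact line. An older SPARK_HOME line with a different path is left in place, and because Rprofile.site is executed top to bottom, the last Sys.setenv call wins.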

- Restart RStudio Server

sudo rstudio-server restart
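The apt and gdebi steps above can be collected into one function. This is a sketch, not a tested installer. It assumes Ubuntu, the 0.99.893 package used in this post, and a hypothetical DRY_RUN=1 switch that only prints the commands. Instead of answering the [y/N] question by hand, it uses gdebi -n (non-interactive mode). It does not edit Rprofile.site.

```shell
#!/usr/bin/env bash
# Package file name and URL from this post (RStudio Server 0.99.893, 64-bit).
RSTUDIO_DEB="rstudio-server-0.99.893-amd64.deb"
RSTUDIO_URL="https://download2.rstudio.org/${RSTUDIO_DEB}"

# Run a command, or only print it when DRY_RUN=1 (hypothetical switch).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Hypothetical helper: install RStudio Server on an Ubuntu client node.
install_rstudio_server() {
  run sudo apt-get update || return 1
  run sudo wget "$RSTUDIO_URL" || return 1
  run sudo apt-get install -y gdebi-core libjpeg62 || return 1
  # -n: non-interactive, so gdebi does not stop at the [y/N] question.
  run sudo gdebi -n "$RSTUDIO_DEB" || return 1
  run sudo rstudio-server restart
}
```

Run DRY_RUN=1 install_rstudio_server first to review the commands. If gdebi reports unmet dependencies, sudo apt-get -f install still applies. If Rprofile.site is edited after this function has run, restart RStudio Server again.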

- RStudio with Spark is now installed and can be accessed on

- Log in as a regular Unix user (if you do not have one, run sudo adduser user1). The user cannot be root or have a user ID lower than 100.
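To check an account against this login rule, look up its UID with id -u. Here is a sketch. can_login_rstudio is a hypothetical helper, and the minimum UID is a parameter that defaults to 100, the value stated above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: can this Unix account log in to RStudio Server?
# Rule from the post: not root, and UID not lower than the minimum (default 100).
can_login_rstudio() {
  local user="$1"
  local min_uid="${2:-100}"
  local uid
  # id -u prints the numeric UID and fails if the account does not exist.
  if ! uid=$(id -u "$user" 2>/dev/null); then
    echo "no such user: $user" >&2
    return 1
  fi
  if [ "$uid" -eq 0 ]; then
    echo "$user is root" >&2
    return 1
  fi
  if [ "$uid" -lt "$min_uid" ]; then
    echo "$user has UID $uid, lower than $min_uid" >&2
    return 1
  fi
  echo "$user (UID $uid) can log in"
}
```

For example, can_login_rstudio user1 after creating the account with adduser.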

- Load the SparkR library

library(SparkR, lib.loc = c(file.path(Sys.getenv("SPARK_HOME"), "R", "lib")))

- Set the SparkContext environment values (passed as the sparkEnvir parameter when creating the SparkContext in the next step). These can be adjusted to the cluster and user needs.

spark_env <- list('spark.executor.memory' = '4g', 'spark.executor.instances' = '4', 'spark.executor.cores' = '4', 'spark.driver.memory' = '4g')

- Create the SparkContext

sc <- sparkR.init(master = "yarn-client", appName = "RStudio", sparkEnvir = spark_env, sparkPackages="com.databricks:spark-csv_2.10:1.4.0")

- Create an sqlContext

sqlContext <- sparkRSQL.init(sc)

- If the SparkContext has to be initialized again, stop it first and then repeat the last two steps.

sparkR.stop()
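Users who stay with the SparkR CLI can pass roughly the same session settings on the command line, because bin/sparkR hands its options to spark-submit. Here is a sketch. It assumes SPARK_HOME is set and reuses the values from spark_env and sparkR.init() above. start_sparkr_cli and the DRY_RUN switch are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: start the SparkR shell with the settings used in RStudio.
# bin/sparkR passes these options on to spark-submit.
# Set DRY_RUN=1 to print the command instead of running it.
start_sparkr_cli() {
  if [ -z "${SPARK_HOME:-}" ]; then
    echo "SPARK_HOME is not set" >&2
    return 1
  fi
  # Same master, package and spark_env values as the RStudio session.
  local cmd=(
    "$SPARK_HOME/bin/sparkR"
    --master yarn-client
    --packages com.databricks:spark-csv_2.10:1.4.0
    --conf spark.executor.memory=4g
    --conf spark.executor.instances=4
    --conf spark.executor.cores=4
    --conf spark.driver.memory=4g
  )
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```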

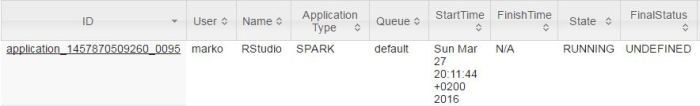

The running application can then be monitored and managed in the YARN ResourceManager console.
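The same information is also available from the command line. The sketch below picks the ID of the RStudio session out of the output of yarn application -list -appStates RUNNING. It assumes the usual tab-separated layout, with Application-Id in the first column and Application-Name in the second. Here the name is the appName = "RStudio" passed to sparkR.init(). Check the layout on your Hadoop version. app_ids_by_name is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `yarn application -list` output on stdin and print
# the Application-Id of every application whose Application-Name equals $1.
# Assumes tab-separated columns (Id first, Name second) padded with spaces.
app_ids_by_name() {
  awk -F'\t' -v name="$1" '
    # Data rows start with an application ID such as application_<cluster>_<n>.
    /^[ \t]*application_/ {
      id = $1
      app = $2
      # Strip the padding around both columns before comparing.
      gsub(/^[ \t]+|[ \t]+$/, "", id)
      gsub(/^[ \t]+|[ \t]+$/, "", app)
      if (app == name) print id
    }'
}
```

For example: yarn application -list -appStates RUNNING | app_ids_by_name RStudio. An ID found this way can be stopped with yarn application -kill followed by the ID. Calling sparkR.stop() in RStudio ends the session from the R side instead.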

SparkR in RStudio is now ready for use. In order to get a better understanding of how SparkR works with R, check this post: DataFrame vs data.frame.