A Spark Context needs several network ports to run. Most of them are chosen at random by default, which makes them difficult to control. This post describes how I control Spark's ports.

In my clusters, some nodes are dedicated client nodes: users can log in to them, store files under their home directories (defining home on an attached volume is described here), and run jobs on them.

The Spark jobs can be run in different ways, from different interfaces – Command Line Interface, Zeppelin, RStudio…

Links to Spark installation and configuration

Installing Apache Spark 1.6.0 on a multinode cluster

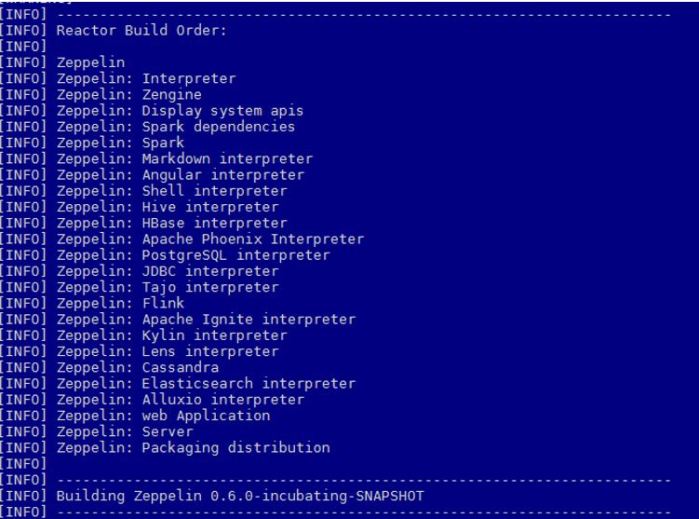

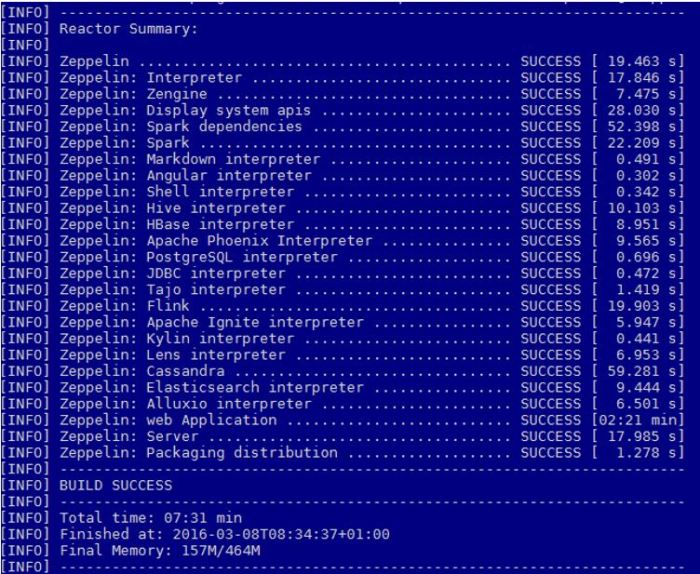

Building Apache Zeppelin 0.6.0 on Spark 1.5.2 in a cluster mode

Building Zeppelin-With-R on Spark and Zeppelin

What Spark Documentation says

Spark UI

The Spark UI, which shows the application's dashboard, listens on port 4040 by default (link). The property name is

spark.ui.port

When a new Spark Context is created, it first tries to bind the UI to port 4040. If that port is taken, it tries 4041, then 4042, and so on, until a free port is found or the maximum number of retries (spark.port.maxRetries, 16 by default) is reached.

Each failed attempt is logged as a WARN before the next port is tried. An example follows:

WARN Utils: Service 'SparkUI' could not bind on port 4040. Attempting port 4041.

INFO Utils: Successfully started service 'SparkUI' on port 4041.

INFO SparkUI: Started SparkUI at http://client-server:4041

According to the log, the Spark UI is now listening on port 4041.
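The retry behaviour described above can be illustrated with plain sockets. This is only a sketch of the idea, not Spark's own code: `find_free_port` is a hypothetical helper, and the default of 16 retries is chosen to match the default of spark.port.maxRetries.

```python
import socket


def find_free_port(start_port, max_retries=16, host="127.0.0.1"):
    """Return the first port in start_port..start_port+max_retries that can be bound."""
    for offset in range(max_retries + 1):
        port = start_port + offset
        probe = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
        try:
            probe.bind((host, port))
            return port
        except socket.error:
            # Port is taken: report it, like the WARN in the log, and move on.
            print("could not bind on port %d, attempting port %d" % (port, port + 1))
        finally:
            probe.close()
    raise RuntimeError("no free port after %d retries" % max_retries)


# Starts at 4040 and walks upwards, the same way the Spark UI does.
print(find_free_port(4040))
```

The helper releases the port again before returning, so it only demonstrates the search order; a real service would keep the socket open.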

So the UI port is predictable rather than random. That is not the case for the ports in the next section.

Networking

The Networking section of the Spark 1.6.x documentation lists six properties whose default value is random. This post focuses on those six, shown in the following picture:

While the Spark Context is being created, these properties receive random values.

spark.blockManager.port

spark.broadcast.port

spark.driver.port

spark.executor.port

spark.fileserver.port

spark.replClassServer.port

These are the properties that should be controlled. They can be controlled in different ways, depending on how the job is run.

Scenarios and solutions

If you do not care about the values assigned to these properties, no further steps are needed.

Configuring ports in spark-defaults.conf

If you are running one Spark application per node (for example, submitting Python scripts with spark-submit), you might want to define the properties in $SPARK_HOME/conf/spark-defaults.conf. Below is an example of what should be added to the configuration file.

spark.blockManager.port 38000

spark.broadcast.port 38001

spark.driver.port 38002

spark.executor.port 38003

spark.fileserver.port 38004

spark.replClassServer.port 38005
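If several nodes need the same block of lines with a different starting port, the entries can be generated instead of typed by hand. The following is a minimal sketch: `render_port_config` is a hypothetical helper, and handing out consecutive ports in the order of the example above is an assumption of this sketch, not a Spark requirement.

```python
# The six properties from the example above, in the same order.
PORT_PROPERTIES = [
    "spark.blockManager.port",
    "spark.broadcast.port",
    "spark.driver.port",
    "spark.executor.port",
    "spark.fileserver.port",
    "spark.replClassServer.port",
]


def render_port_config(base_port):
    """Return spark-defaults.conf lines that assign consecutive ports from base_port."""
    return ["%s %d" % (name, base_port + i) for i, name in enumerate(PORT_PROPERTIES)]


# Prints the lines to append to $SPARK_HOME/conf/spark-defaults.conf.
print("\n".join(render_port_config(38000)))
```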

If a test is run, for example spark-submit test.py, the Spark UI uses its default port 4040 and the ports defined above are used.

Running the following command

sudo netstat -tulpn | grep 3800

returns the following output:

tcp6 0 0 :::38000 :::* LISTEN 25300/java

tcp6 0 0 10.0.173.225:38002 :::* LISTEN 25300/java

tcp6 0 0 10.0.173.225:38003 :::* LISTEN 25300/java

tcp6 0 0 :::38004 :::* LISTEN 25300/java

tcp6 0 0 :::38005 :::* LISTEN 25300/java
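When sudo is not available, a similar check can be made from Python by trying to connect to each port. This is a rough sketch: `is_listening` is a hypothetical helper, and the host must be an address the driver actually listens on (some of the ports above are bound to 10.0.173.225 only, so probing 127.0.0.1 would report them as closed).

```python
import socket


def is_listening(port, host="127.0.0.1", timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    probe = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    probe.settimeout(timeout)
    try:
        # connect_ex returns 0 on success and an error code otherwise.
        return probe.connect_ex((host, port)) == 0
    finally:
        probe.close()


# Probe the block of ports configured in spark-defaults.conf.
for port in range(38000, 38006):
    print("%d %s" % (port, "LISTEN" if is_listening(port) else "closed"))
```

Unlike netstat, this does not show which process owns a port; it only tells whether something accepts connections on it.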

Configuring ports directly in a script

In my case, different users prefer different ways of running Spark applications. Here is an example of how the ports are configured in a Python script.

    """Pi-estimation.py"""
    from random import randint, random

    from pyspark.conf import SparkConf
    from pyspark.context import SparkContext


    def sample(p):
        # Draw a random point in the unit square and test whether it
        # falls inside the quarter circle of radius 1.
        x, y = random(), random()
        return 1 if x*x + y*y < 1 else 0


    conf = SparkConf()
    conf.setMaster("yarn-client")
    conf.setAppName("Pi")

    # Fixed ports for this application.
    conf.set("spark.ui.port", "4042")
    conf.set("spark.blockManager.port", "38020")
    conf.set("spark.broadcast.port", "38021")
    conf.set("spark.driver.port", "38022")
    conf.set("spark.executor.port", "38023")
    conf.set("spark.fileserver.port", "38024")
    conf.set("spark.replClassServer.port", "38025")

    conf.set("spark.driver.memory", "4g")
    conf.set("spark.executor.memory", "4g")

    sc = SparkContext(conf=conf)

    NUM_SAMPLES = randint(5000000, 100000000)

    # xrange is Python 2; use range on Python 3.
    count = sc.parallelize(xrange(0, NUM_SAMPLES)).map(sample) \
        .reduce(lambda a, b: a + b)

    print("NUM_SAMPLES is %i" % NUM_SAMPLES)
    print("Pi is roughly %f" % (4.0 * count / NUM_SAMPLES))

(The above Pi estimation is a Spark example that comes with Spark installation)

The property values set in the script override those in the spark-defaults.conf file. While this script runs, port 4042 and ports 38020-38025 are used.
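The Spark documentation gives the order of precedence as: properties set directly on SparkConf first, then flags passed to spark-submit, then the values in spark-defaults.conf. The sketch below models that order with plain dictionaries. It is an illustration only, not how Spark resolves settings internally, and `effective_conf` is a hypothetical helper.

```python
def effective_conf(defaults_file, submit_flags, script_conf):
    """Merge settings so that later sources override earlier ones."""
    merged = {}
    for source in (defaults_file, submit_flags, script_conf):
        merged.update(source)
    return merged


# Values taken from the two examples in this post.
defaults_file = {"spark.driver.port": "38002", "spark.blockManager.port": "38000"}
script_conf = {"spark.driver.port": "38022", "spark.ui.port": "4042"}

resolved = effective_conf(defaults_file, {}, script_conf)
print(resolved["spark.driver.port"])        # the value from the script wins
print(resolved["spark.blockManager.port"])  # falls back to spark-defaults.conf
```

A property that the script does not set keeps its value from spark-defaults.conf, so a script that overrides only some of the ports still shares the remaining ones with other applications on the node.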

If the netstat command is run again, this time for all ports that start with 380:

sudo netstat -tulpn | grep 380

The following output is shown:

tcp6 0 0 :::38000 :::* LISTEN 25300/java

tcp6 0 0 10.0.173.225:38002 :::* LISTEN 25300/java

tcp6 0 0 10.0.173.225:38003 :::* LISTEN 25300/java

tcp6 0 0 :::38004 :::* LISTEN 25300/java

tcp6 0 0 :::38005 :::* LISTEN 25300/java

tcp6 0 0 :::38020 :::* LISTEN 27280/java

tcp6 0 0 10.0.173.225:38022 :::* LISTEN 27280/java

tcp6 0 0 10.0.173.225:38023 :::* LISTEN 27280/java

tcp6 0 0 :::38024 :::* LISTEN 27280/java

Two processes are running, each with its own Spark application, on the ports that were defined beforehand.
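With more applications on a node, reading the netstat output by eye gets tedious. As a sketch, the output can be grouped by process ID. `ports_by_pid` is a hypothetical helper that assumes the column layout shown above (protocol, queues, local address, foreign address, state, PID/program); the sample text is a shortened copy of that output.

```python
from collections import defaultdict

NETSTAT_OUTPUT = """\
tcp6 0 0 :::38000 :::* LISTEN 25300/java
tcp6 0 0 10.0.173.225:38002 :::* LISTEN 25300/java
tcp6 0 0 :::38020 :::* LISTEN 27280/java
tcp6 0 0 10.0.173.225:38022 :::* LISTEN 27280/java
"""


def ports_by_pid(netstat_text):
    """Map each PID to the sorted list of local ports it listens on."""
    owners = defaultdict(list)
    for line in netstat_text.splitlines():
        fields = line.split()
        # Skip anything that is not a listening TCP socket line.
        if len(fields) < 7 or fields[5] != "LISTEN":
            continue
        port = int(fields[3].rsplit(":", 1)[1])  # text after the last colon
        pid = fields[6].split("/", 1)[0]         # "25300/java" -> "25300"
        owners[pid].append(port)
    return {pid: sorted(ports) for pid, ports in owners.items()}


for pid, ports in sorted(ports_by_pid(NETSTAT_OUTPUT).items()):
    print("%s %s" % (pid, ports))
```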

Configuring ports in Zeppelin

Since my users use Apache Zeppelin, similar network management had to be done there. Zeppelin also sends jobs to the Spark Context through the spark-submit command, which means the properties can be configured in the same way, this time through an interpreter in Zeppelin:

Open the Interpreter menu and select the spark interpreter. From there it is a matter of adding the new properties and their values. Do not forget to click the plus sign once a new property is ready to be added.

At the very end, save everything and restart the spark interpreter.

Below is an example of how this is done:

The next time a Spark Context is created in Zeppelin, the configured ports will be used.

Conclusion

This can be useful if multiple users are running Spark applications on one machine and have separate Spark Contexts.

In case of Zeppelin, this comes in handy when one Zeppelin instance is deployed per user.