Automation is the key word when it comes to using cloud services. Pay-as-you-go is the philosophy behind it.

In this post, I explain how I provision Apache Spark cluster on Amazon. The configuration of the cluster is done prior to the provisioning using the Jinja2 file templates. The cluster, once provisioning is completed, is therefor ready to use immediately.

One of the points with automation is to make data scientists more independant of data engineers: data engineer builds the solution and data scientist uses it without having the need for engineering experience.

In this case, the data scientist hast to configure the cluster using the YAML file and prepare a GitHub repository.

There are two ways of using this solution:

- A long-live Spark cluster

Spark cluster serves as a solution for running various jobs. The cluster is always available. - One-time job execution

Spark cluster is provisioned for a specific job which is executed and then the cluster is destroyed. Data Scientist is responsible for data input and data storage in the code (example).

Technologies and services

- AWS EC2 (Centos7)

- Terraform for provisioning the infrastructure in AWS

- Consul for cluster’s configuration settings

- Ansible for software installation on the cluster

- GitHub for version control

- Docker as test and development environment

- Powershell for running Docker and provision

- Visual Studio Code for software development, running Powershell

In order to use a service like EC2 in AWS, the Virtual Private Cloud must be established. This is something I have automized using Terraform and Consul and described here. This provision is a “long-live” provision since VPC has practically no cost.

Prerequisites

I will not go into details of how to install all the technologies and services from the list. However, this GitHub repository does build a Docker container with Consul and latest Terraform. Consul in the Docker is an agent which connects to a global Consul server in Amazon. Documenting the global Consul is on my TO-DO list.

I suggest investing some time and creating a Consul with connection to your own GitHub repository that stores the configuration.

Repository on GitHub

The repository can be found at this address.

Repository Structure

There are two modules used in this project: instance and provision-spark. The module instance is pure Terraform code and does the provisioning of the instances (Spark’s master and workers) in the AWS. The output (DNS and IP addresses) of this module is the input for the module provision-spark which is more complex. It is written in Terraform, Ansible and Jinja2.

Ansible Roles

Below is the structure of the Ansible part of the module.

Roles prereq and spark are applied to all instances. The prereq role takes care of the prerequisites (java, anaconda) and the spark role downloads and installs Spark, and creates Linux objects needed for Spark to work. The start_spark_master applies only to the master instance and start_spark_workers to the worker instances. The role execute_on_spark automatically executes a job on Spark cluster (more on that later).

The path to the YAML file that executes the roles is available here.

Cluster Configuration

Cluster is configured in YAML format and the configuration is sent to the global Consul server. One configuration block servers one cluster. Example for cluster lr_iris can be found here.

Running the code

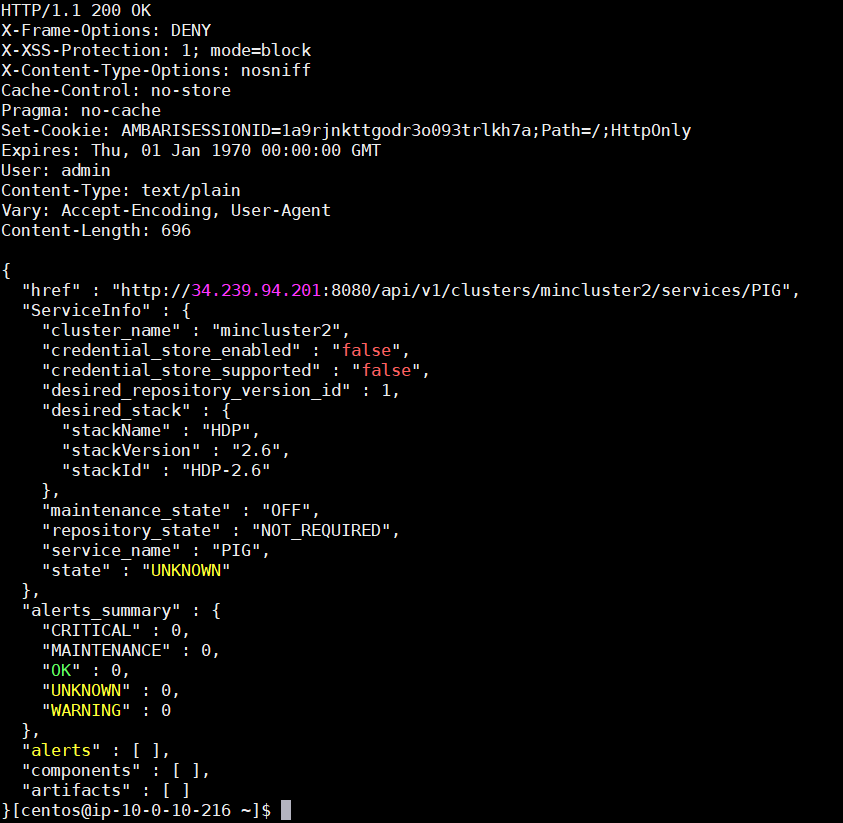

Provisioning starts in module provision-spark where the line

terraform apply -auto-approvestarts provisioning the cluster. Configuration is taken from Consul to populate the variables in Terraform. Ansible (inventory) file is created by Terraform after the EC2 instances are launched and started. After the inventory file is created, Terraform executes the spark.yml file and the rest is in the hands of Ansible. If everything goes well, the output is similar to the following:

This is a Terraform output as defined in the output.tf file.

The Spark cluster is now ready.

View in AWS Console

The instances in the Spark cluster look like this in AWS console:

Spark as a Service

Spark services running on master and workers are handled as services using systemctl. The services are created and started using Ansible: Spark workers start a service called spark-worker whose Ansible code can be seen here.

Spark Master has two services: spark-master and sparkhs (Spark History Server). Ansible code for both services is here.

Spark Master

Checking if Spark Master is available by using the public IP address and port 8080 should return an interface similar to this one:

Five workers were set up in the configuration file. This means we have a cluster with six instances: one is the Spark Master, the other five are the workers.

Spark History Server

Spark History Server, just like Spark Master become significant if long-live cluster is used. It helps monitoring and debugging the jobs (applications in Spark language).

Spark History Server can be reached at port 18080 on Spark Master.

Above is an example of an application that was executed on the Spark cluster. Note Event log directory – it is pointing to a local directory which will be removed once the cluster is destroyed. This is not an issue if we are running a long-live cluster, but if we want to keep logs for one-time clusters it is advised to store the logs externally. In this case, since Amazon is used, storing to S3 would be the best option.

Automatic Code Execution

The Spark cluster is now ready to use. Full automation process is achieved when the Spark code is automatically executed from the Terraform code once the cluster is available. In the repository, one of the Ansible roles is execute_on_spark which executes either a Python or a Scala code on the provisioned Spark cluster.

Which Spark code will be executed depends on the configuration in the YAML file. A path to a GitHub repository is part of the configuration and that repository is cloned to the Spark Master and executed.

An example mentioned above can be found here. The example is one of Hello Worlds in data science – Logistic Regression on Iris dataset. In this case, the Data Scientist is responsible for the input data and storing the results outside of the Spark cluster.

input_file = "s3a://hdp-hive-s3/test/iris.csv"

output_dir = "s3a://hdp-hive-s3/test/git_iris_out"When the cluster is ready, the repository is cloned and the code is executed. Inside the code, the Data Scientist defines input and output. In this case, object storage S3 is used to do a one-time job, save the results and the Spark cluster is of no use anymore.